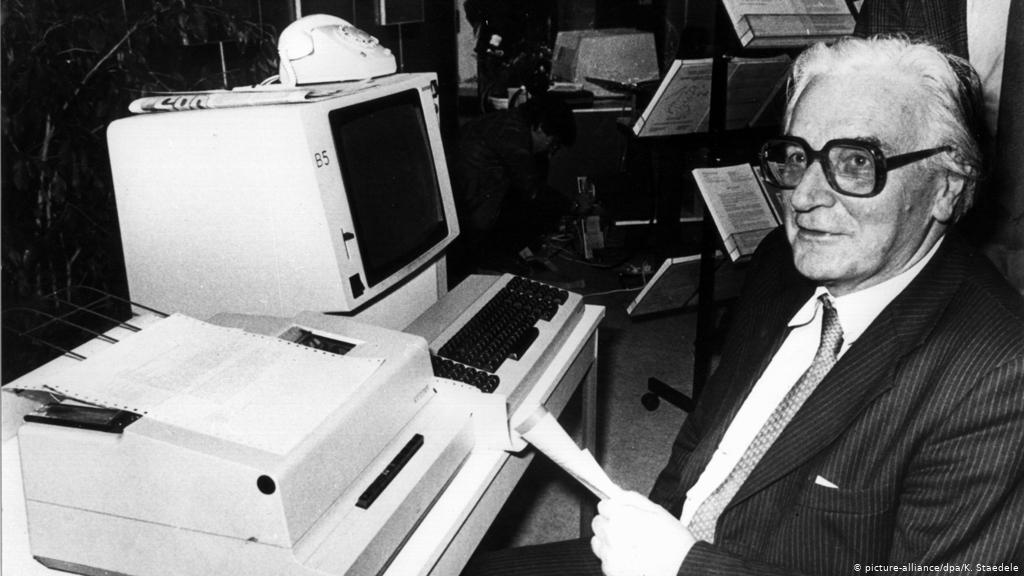

Konrad Zuse

Konrad Zuse was a German civil engineer, pioneering computer scientist, inventor and businessman. His greatest achievement was the world’s first programmable computer; the functional program-controlled Turing-complete Z3 became operational in May 1941.

Born: 22 June 1910, Berlin, Germany

Died: 18 December 1995, Hünfeld, Germany

Spouse: Gisela Brandes (m. 1945–1995)

Children: Horst Zuse, Klaus Peter Zuse, Monika Zuse Gruden, Friedrich Zuse, and others

Awards: Werner von Siemens Ring, Wilhelm Exner Medal, Harry H. Goode Memorial Award


Konrad Zuse was born on 22 June 1910 in Berlin (Wilmersdorf), the capital of Germany, to a Prussian postal officer, Emil Wilhelm Albert Zuse (26.04.1873-14.05.1946), and Maria Crohn Zuse (10.01.1882-02.07.1957). Konrad had a sister, Lieselotte (1908-1953), who was two years older.

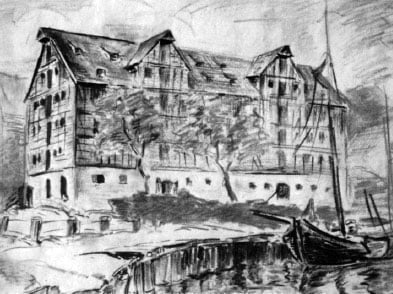

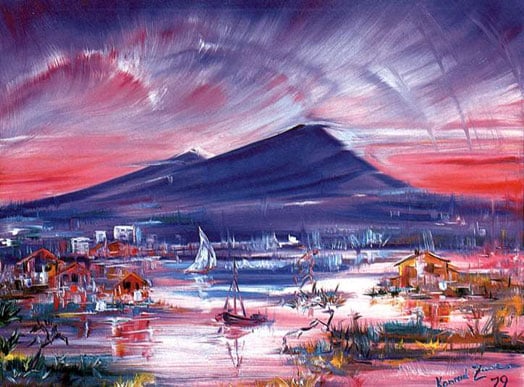

In 1912 the Zuse family left for Braunsberg, a sleepy small town in East Prussia, where Emil Zuse had been appointed postal clerk. From early childhood Konrad showed a great talent, not for mathematics or engineering but for painting (see the chalk drawing nearby, made by Zuse during his school years).

Konrad started school at a very young age and enrolled at the humanistic Gymnasium Hosianum in Braunsberg. After his family moved to Hoyerswerda, a town in the German state of Saxony, he passed his Abitur (the final examinations that German students take at the end of their secondary education) at the Reform-Real-Gymnasium in Hoyerswerda. After graduating, the young Konrad was unsure what to study next: engineering or painting. Fritz Lang’s 1927 film Metropolis made a deep impression on him. He dreamed of designing and building a giant, impressive futuristic city like Metropolis, and even started to draw some projects. In the end he decided to study civil engineering at the Technical College (Technische Hochschule) in Berlin-Charlottenburg.

During his studies he also worked as a bricklayer and bridge builder. At that time traffic lights were being introduced in Berlin, causing total chaos in the traffic. Zuse was one of the first people to try to design something like a “green wave”, but without success. He was also very interested in photography, and designed an automated system for developing band negatives, using punched cards as accompanying sheets for control purposes. Later he devised a special system for film projection, the so-called Elliptisches Kino.

The next major project of the young dreamer was the conquest of space. He dreamed of building bases on the moons of the outer planets of the Solar System. On these bases a fleet of rockets would be built, each carrying one or two hundred passengers and capable of flying at one-thousandth the speed of light, so as to reach the nearest fixed star after a journey of thousands of years.

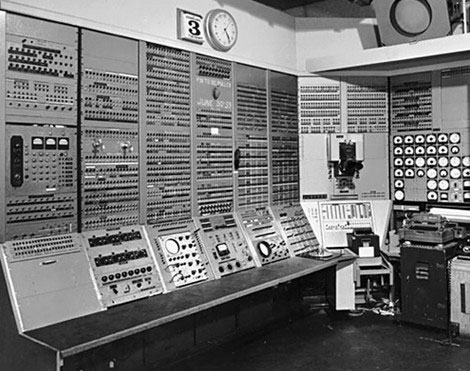

The future city Metropolis, the automatic photo lab, the elliptical cinema, the space project: all these are only a small part of the technical ideas that paved the way for the invention of the computer. After graduating from the Technische Hochschule in 1935, he started as a design engineer at the Henschel Flugzeugwerke (Henschel aircraft factory) in Berlin-Schönefeld, but resigned a year later, deciding to devote himself entirely to the construction of a computer. From 1935 until 1964 Zuse was almost entirely devoted to the development of the first relay computer in the world, the first workable programmable computer in the world, the first high-level computer language in the world, and more.

In January 1945 Konrad Zuse married one of his employees, Gisela Ruth Brandes. Their first son, Horst, was born on 17 November of the same year; he would follow his eminent father, earning a diploma in electrical engineering and a Ph.D. in computer science. Later came Monika (1947-1988), Ernst Friedrich (1950-1979), Hannelore Birgit (1957) and Klaus-Peter (1961).

After 1964, Zuse KG was no longer owned and controlled by Konrad Zuse. Losing his company was a heavy blow for Zuse, but the debts were too high. In 1967 he received another blow when the German patent court rejected his patent applications, and Zuse lost his 26-year fight over the invention of the Z3 with all its new features (his first patent application dates from 1941).

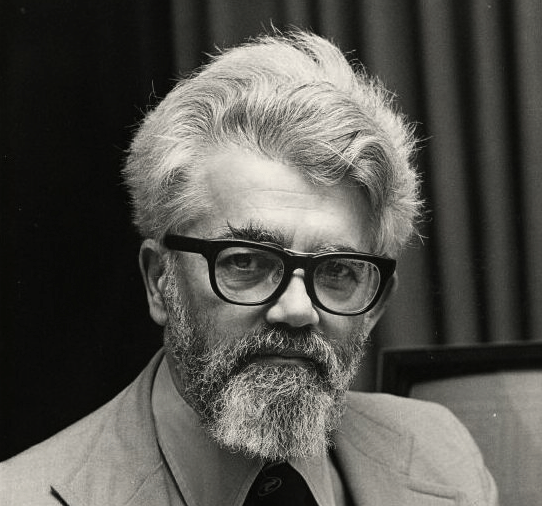

An oil painting by Konrad Zuse (1979) (Source: http://www.epemag.com/zuse)

But in the 1960s the retired Zuse was still a man full of energy and ideas. He started to write an autobiography (published in 1970), made many beautiful oil paintings (see the image above), reconstructed his first computer (the Z1), and more. In 1965 he was given the Werner von Siemens Ring, one of the most prestigious technical awards in Germany. In the same year Zuse received the Harry Goode Memorial Award, together with George Stibitz, in Las Vegas.

In 1969 Zuse published Rechnender Raum, the first book on digital physics. He proposed that the universe is being computed by some sort of cellular automaton or other discrete computing machinery, challenging the long-held view that some physical laws are continuous by nature. He focused on cellular automata as a possible substrate of the computation, and pointed out that the classical notions of entropy and its growth do not make sense in deterministically computed universes.
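To make the idea of a deterministically computed universe more concrete, here is a minimal sketch of a one-dimensional cellular automaton in Python. It is only an illustration of the general concept, not a model taken from Rechnender Raum: the function name `step`, the choice of Rule 110, and the wrap-around boundary are all assumptions made for this example.

```python
def step(cells, rule=110):
    """Advance a 1-D binary cellular automaton by one time step.

    `cells` is a list of 0/1 values; the row wraps around at the edges.
    `rule` is a Wolfram-style rule number (0-255). Both the rule choice
    and the wrap-around boundary are illustrative assumptions.
    """
    n = len(cells)
    new_cells = []
    for i in range(n):
        left = cells[(i - 1) % n]
        centre = cells[i]
        right = cells[(i + 1) % n]
        # The three neighbouring bits form an index 0..7 into the rule table.
        index = (left << 2) | (centre << 1) | right
        new_cells.append((rule >> index) & 1)
    return new_cells


if __name__ == "__main__":
    row = [0] * 15 + [1]
    for _ in range(8):
        print("".join("#" if c else "." for c in row))
        row = step(row)
```

Because each new row depends only on the previous one, running the same starting row twice always yields the same history, which is the sense in which such a universe is deterministic.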

In 1992 Zuse started his last project, the Helix-Tower (see the image below): a variable-height tower, built from uniformly shaped and repeatable elements, for catching wind in order to produce energy more easily. The propeller and wind generator were to be mounted on top of the tower. Zuse used a very elegant mechanical construction and received a patent for it in 1993. The height of the tower could be changed by adding or removing building blocks.

Konrad Zuse with the project of his Helix-Tower (Source: http://www.epemag.com/zuse)

Konrad Zuse must be credited (alone or with other inventors) with the following pioneering achievements in computer science:

1. The use of the binary number system for numbers and circuits.

2. The use of floating-point numbers, along with the algorithms for converting from binary to decimal and vice versa.

3. The carry look-ahead circuit for the addition operation and program look-ahead (the program is read two instructions in advance, and it is tested to see whether memory instructions can be performed ahead of time).

4. The world’s first complete high-level language (Plankalkül).
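The carry look-ahead idea from item 3 can be sketched in a few lines of Python. This is the modern textbook formulation with generate and propagate signals, not a reconstruction of Zuse’s relay circuit; the function name `carry_lookahead_add` and the `width` parameter are hypothetical names chosen for this example.

```python
def carry_lookahead_add(a, b, width=8):
    """Add two unsigned integers of `width` bits with look-ahead carries.

    Returns (sum modulo 2**width, carry out of the top bit).
    Textbook generate/propagate formulation; an illustrative sketch only.
    """
    # generate[i]: bit i produces a carry on its own (both inputs are 1).
    generate = [((a >> i) & (b >> i)) & 1 for i in range(width)]
    # propagate[i]: bit i passes an incoming carry on (exactly one input is 1).
    propagate = [((a >> i) ^ (b >> i)) & 1 for i in range(width)]

    carries = [0]  # no carry into bit 0
    for i in range(width):
        # The carry into bit i+1 is computed directly from the generate and
        # propagate signals, never from a previously computed carry: some
        # bit j <= i generates a carry and every bit between j+1 and i
        # propagates it.
        carry = 0
        for j in range(i + 1):
            term = generate[j]
            for k in range(j + 1, i + 1):
                term &= propagate[k]
            carry |= term
        carries.append(carry)

    total = 0
    for i in range(width):
        total |= (propagate[i] ^ carries[i]) << i
    return total, carries[width]
```

In hardware all the carry terms are evaluated in parallel, which is where the speed advantage over a ripple-carry adder comes from; the nested loops here only spell out the logic. For example, `carry_lookahead_add(0b1011, 0b0110, width=4)` adds 11 and 6 in four bits.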

This remarkable man, Konrad Zuse, died of a heart attack on 18 December 1995 in Hünfeld, Germany.